TL;DR

Six major AI companies -- Anthropic, OpenAI, Google, AWS, Microsoft, and Salesforce -- co-founded the Agentic AI Foundation (AAIF) in 2026, choosing MCP as the universal open standard for agent tooling. OpenClaw was already MCP-native before the foundation existed, making it the most naturally aligned framework. Rapid Claw deploys AAIF-compliant OpenClaw agents in 60 seconds.

In March 2026, Anthropic, OpenAI, Google, AWS, Microsoft, and Salesforce co-founded the Agentic AI Foundation (AAIF). The founding announcement unified the industry around three open projects: MCP (Model Context Protocol) as the universal tool and context layer, OpenAI's AGENTS.md as the agent behavior specification, and Block's Goose project as the reference implementation. The open-standard war for agentic infrastructure just ended, and the industry picked MCP. Here is what that means for anyone building or deploying OpenClaw agents.

To understand why this is significant, consider the counterfactual. Six months ago, each major lab was quietly building proprietary tooling layers for its models. Anthropic had MCP, but adopting it meant betting on Anthropic's roadmap. OpenAI had its own function-calling conventions. Google had Vertex AI extensions. AWS had Bedrock agents. Enterprises evaluating agentic infrastructure faced fragmented stacks with no clear convergence point. The AAIF announcement changes that calculus. When six of the largest AI companies co-sign a single protocol, the risk of lock-in at the protocol layer drops sharply, and the ecosystem of connectors, integrations, and tooling starts compounding immediately.

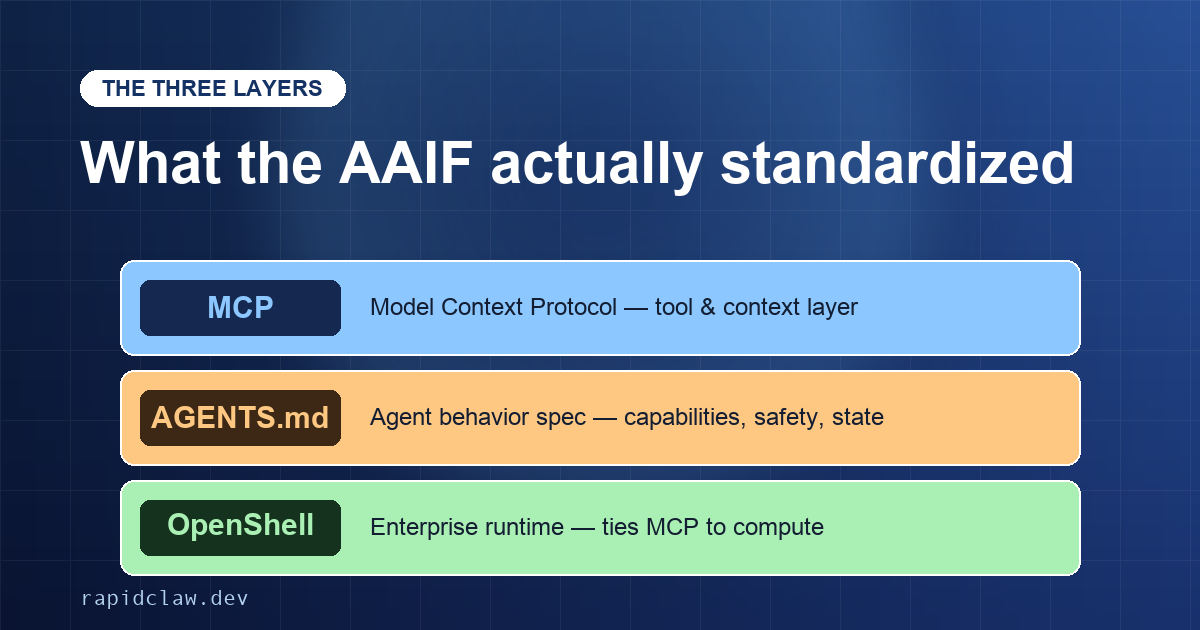

What the AAIF standardized: three layers

MCP (Model Context Protocol) -- the universal tool and context layer for agent-to-tool communication.

AGENTS.md -- the agent behavior specification defining capabilities, error handling, and safety constraints.

OpenShell -- the enterprise runtime that ties MCP tool calls to the underlying compute layer.

What the AAIF standardized

The AAIF specification covers three layers. MCP is the universal tool and context protocol — the way an agent discovers what tools are available, requests context from external systems, and returns structured results. Think of it as HTTP for agents: a well-defined request/response contract that any compliant runtime can implement. AGENTS.md defines agent behavior: how agents describe their capabilities, how they handle errors, how they report state, and what safety constraints they honor. OpenShell, announced at NVIDIA's GTC as the enterprise runtime, provides the execution environment that ties MCP tool calls to the underlying compute layer.

The practical result is that OpenClaw, as an MCP-native framework, becomes a first-class deployment target across the major clouds. AWS Bedrock, Google Vertex, Azure AI, and NVIDIA's enterprise stack each expose an AAIF-compliant endpoint that an OpenClaw agent can call through MCP, so a connector written once against the MCP spec works across all of them without modification.
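To make the request/response contract concrete, here is a minimal in-process sketch in Python of the two MCP methods an agent relies on most: `tools/list` for discovery and `tools/call` for invocation, both carried as JSON-RPC 2.0 messages. The `get_weather` tool, its canned reply, and the `handle_request` and `_reply` helpers are hypothetical. The sketch deliberately omits the `initialize` handshake, capability negotiation, and a real transport such as stdio or Streamable HTTP, all of which a compliant server needs.

```python
import json
from typing import Any

# Hypothetical tool registry for illustration. "handler" is our own field and is
# never sent over the wire; name, description, and inputSchema mirror MCP's tool listing.
TOOLS: dict[str, dict[str, Any]] = {
    "get_weather": {
        "description": "Return a canned weather report for a city (toy example).",
        "inputSchema": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
        "handler": lambda args: f"Sunny in {args['city']}",
    }
}


def _reply(req_id: Any, **payload: Any) -> str:
    """Wrap a result or error in a JSON-RPC 2.0 response envelope."""
    return json.dumps({"jsonrpc": "2.0", "id": req_id, **payload})


def handle_request(raw: str) -> str:
    """Dispatch one JSON-RPC request using MCP's tools/list and tools/call methods."""
    req = json.loads(raw)
    req_id = req.get("id")
    if req["method"] == "tools/list":
        tools = [
            {"name": name, "description": t["description"], "inputSchema": t["inputSchema"]}
            for name, t in TOOLS.items()
        ]
        return _reply(req_id, result={"tools": tools})
    if req["method"] == "tools/call":
        name = req["params"]["name"]
        tool = TOOLS.get(name)
        if tool is None:
            return _reply(req_id, error={"code": -32602, "message": f"Unknown tool: {name}"})
        text = tool["handler"](req["params"].get("arguments", {}))
        return _reply(req_id, result={"content": [{"type": "text", "text": text}], "isError": False})
    return _reply(req_id, error={"code": -32601, "message": "Method not found"})


list_reply = json.loads(handle_request('{"jsonrpc": "2.0", "id": 1, "method": "tools/list"}'))
call_reply = json.loads(handle_request(json.dumps({
    "jsonrpc": "2.0", "id": 2, "method": "tools/call",
    "params": {"name": "get_weather", "arguments": {"city": "Denpasar"}},
})))
print([t["name"] for t in list_reply["result"]["tools"]])  # ['get_weather']
print(call_reply["result"]["content"][0]["text"])          # Sunny in Denpasar
```

The error code -32602 for an unknown tool follows the example in the MCP specification; -32601 is the generic JSON-RPC "method not found" code.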

What this means for developers

Protocol-level portability, defined

MCP describes how agents discover tools and exchange structured context. Because all six AAIF founders implement the same wire protocol, an agent's tool integrations written against MCP today run on AWS Bedrock, Google Vertex, Azure AI, and Anthropic's first-party infrastructure without code changes; only the deployment target moves.
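Portability at the protocol level means the agent's tool-calling code never names the platform it runs on. A minimal sketch, assuming each platform can be reduced to a transport function that carries one JSON-RPC message and returns the reply: `call_tool`, `fake_target`, `agent_step`, and the `search_docs` tool are hypothetical stand-ins, not AWS, Google, Azure, or Anthropic APIs, and the example covers only the MCP tool layer, not differences between model-provider APIs.

```python
import itertools
import json
from typing import Callable

# Assumption: a "transport" is any function that carries one JSON-RPC message
# and returns the reply. Real MCP transports are stdio or Streamable HTTP.
Transport = Callable[[str], str]
_request_ids = itertools.count(1)


def call_tool(transport: Transport, name: str, arguments: dict) -> str:
    """Send an MCP tools/call request and return the concatenated text content."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }
    reply = json.loads(transport(json.dumps(request)))
    if "error" in reply:
        raise RuntimeError(reply["error"]["message"])
    return "".join(
        item["text"] for item in reply["result"]["content"] if item["type"] == "text"
    )


def fake_target(label: str) -> Transport:
    """Hypothetical stand-in for an MCP endpoint hosted on some platform."""
    def transport(raw: str) -> str:
        req = json.loads(raw)
        text = f"{label}: ran {req['params']['name']}"
        return json.dumps(
            {"jsonrpc": "2.0", "id": req["id"],
             "result": {"content": [{"type": "text", "text": text}]}}
        )
    return transport


def agent_step(transport: Transport) -> str:
    """Agent logic written once; only the transport argument changes per deployment."""
    return call_tool(transport, "search_docs", {"query": "AAIF"})


for target in (fake_target("target-a"), fake_target("target-b")):
    print(agent_step(target))
# target-a: ran search_docs
# target-b: ran search_docs
```

Keeping the platform behind a single function boundary is the design choice that makes "only the deployment target moves" true in code: swapping targets is a configuration change, not an edit to `agent_step`.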

Before AAIF, building an OpenClaw agent for enterprise deployment meant making a series of bets. Which cloud would your client standardize on? Which model provider would have the best uptime in 18 months? Which tool-calling convention would survive? Each of those bets had compounding risk: a wrong call at the protocol layer meant a rewrite later.

The AAIF collapses that decision tree. You can build an OpenClaw agent against the MCP spec today and deploy it to any AAIF-compliant platform, including platforms that do not exist yet. The ecosystem of MCP-native tools, connectors, and integrations is growing quickly: browser automation, database access, email, calendar, document editing, and code execution are all available as MCP servers today, and the number of connectors will compound as adoption increases. An agent built now benefits from every new MCP connector that ships in the next two years.

Future-proofing your agent investment

Building against the MCP spec today means your agent automatically gains access to every new connector, integration, and platform that adopts the AAIF standard. The compounding effect of ecosystem growth applies retroactively to agents already deployed.

MCP-native, managed for you

Rapid Claw runs OpenClaw with the MCP layer wired up out of the box. Two-founder shop, Bali-based. $29/mo to start. Skip the standards-tracking headache.

The OpenClaw position

OpenClaw was MCP-native before the AAIF existed. Its runtime was built around MCP from the start, which means the integration is not a retrofit: the agent runtime, the tool-calling layer, and the context protocol share a coherent design. When the AAIF standardized MCP, it effectively ratified the architecture OpenClaw was already built on.

That history matters for adoption. OpenClaw does not need to migrate to AAIF compliance; it was already compliant. For developers evaluating which agentic framework to build on in 2026, this removes a major risk factor. The March 2026 OpenClaw update, which added Clawdhub and sub-agents, further reinforced that alignment by shipping features designed around MCP-native tooling. OpenClaw is not a niche tool that happened to match the standard by luck. It was deliberately built on MCP, the protocol that every major infrastructure provider in the industry now backs.

What it means for managed hosting

As the AAIF ecosystem matures, the number of available MCP connectors will increase sharply. More integrations mean more surface area. A browser automation connector, a database MCP server, a Salesforce integration, and an email server each introduce their own update cadences, authentication requirements, and compatibility considerations. When a new MCP spec revision ships — and revisions will ship — every connector in your agent's dependency graph needs to be tested and updated.

Self-hosted OpenClaw deployments absorb that maintenance burden directly; managed hosting providers handle it as part of the service. As the ecosystem grows, the gap between the two options widens: more integrations mean more potential failure points, and with managed hosting those failure points are someone else's responsibility. For teams whose core competency is not agentic infrastructure, this tradeoff becomes more consequential with every new MCP connector that ships.

Rapid Claw tracks AAIF spec revisions and new MCP connectors on its public roadmap — so teams building on managed hosting can see exactly which integrations are landing next, and when.

The maintenance burden grows with the ecosystem

Every new MCP connector adds another dependency with its own update cadence, authentication flow, and compatibility requirements. Self-hosted teams must track and test each one against every AAIF spec revision. This cost scales linearly with the number of integrations your agents rely on.
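The scaling claim can be made concrete as a compatibility matrix. A minimal sketch, with hypothetical connector names and revision labels and an illustrative `outstanding_checks` helper: every (connector, spec revision) pair is a check someone has to run, so for a fixed set of revisions the work grows linearly with the number of connectors, and each new revision adds one check per connector.

```python
from itertools import product

# Hypothetical connectors and spec-revision labels, for illustration only.
CONNECTORS = ["browser", "database", "salesforce", "email"]
REVISIONS = ["rev-A", "rev-B"]


def outstanding_checks(connectors, revisions, verified):
    """Return every (connector, revision) pair that has not yet been tested together."""
    return [pair for pair in product(connectors, revisions) if pair not in verified]


# Suppose every connector was verified against rev-A before rev-B shipped.
verified = {(c, "rev-A") for c in CONNECTORS}
todo = outstanding_checks(CONNECTORS, REVISIONS, verified)
print(todo)
# [('browser', 'rev-B'), ('database', 'rev-B'), ('salesforce', 'rev-B'), ('email', 'rev-B')]
print(len(list(product(CONNECTORS, REVISIONS))))  # 8 checks in total: 4 connectors x 2 revisions
```

On managed hosting the matrix does not disappear; the provider runs it as part of the service.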

NVIDIA's NemoClaw

NVIDIA announced NemoClaw at GTC as its enterprise OpenClaw stack. NemoClaw adds security tooling, compliance controls, and GPU-optimized inference on top of the standard OpenClaw runtime. It is designed for organizations with existing NVIDIA data center investments (H100 fleets, DGX systems, or NVIDIA cloud deployments) that also need audit trails, data residency controls, and SOC 2 or HIPAA compliance documentation.

NemoClaw is a serious product for a specific market: large enterprises with both GPU infrastructure and compliance requirements that rule out consumer AI services. For teams that fit that profile, it is worth evaluating. For everyone else — startups, agencies, small-to-mid engineering teams — the deployment complexity and GPU dependency add overhead that does not match the use case. Standard OpenClaw on a managed platform handles the agent runtime without requiring a procurement process or a GPU fleet.

Where Rapid Claw sits

Rapid Claw is MCP-native and AAIF-compliant. OpenClaw agents deploy in 60 seconds and are live on infrastructure that handles platform updates as the standard evolves. When AAIF ships a revision to the MCP spec, Rapid Claw updates the runtime — no action required on the developer side.
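Spec revisions are coordinated at connection time. As we read the lifecycle section of the MCP specification, a client sends its preferred `protocolVersion` in the `initialize` request; a server that supports it echoes it back, otherwise it offers another version it supports (preferably its latest), and the client disconnects if it cannot speak that version. The sketch below models only that decision logic. `server_choose_version` and `client_accepts` are illustrative names, this is not Rapid Claw's update mechanism, and "2099-01-01" is a made-up future revision.

```python
# MCP protocol revisions are identified by date strings (YYYY-MM-DD).
SERVER_SUPPORTED = ["2024-11-05", "2025-03-26", "2025-06-18"]


def server_choose_version(requested: str, supported: list[str]) -> str:
    """Echo the client's requested version if supported, else offer the newest one we support."""
    if requested in supported:
        return requested
    # ISO date strings sort chronologically, so max() picks the latest revision.
    return max(supported)


def client_accepts(offered: str, client_supported: list[str]) -> bool:
    """A client that cannot speak the offered version should disconnect."""
    return offered in client_supported


print(server_choose_version("2025-03-26", SERVER_SUPPORTED))  # 2025-03-26
print(server_choose_version("2099-01-01", SERVER_SUPPORTED))  # 2025-06-18
print(client_accepts("2025-06-18", ["2099-01-01"]))           # False
```

The last line is the failure mode that revision tracking guards against: a client that has moved to a newer revision and a server that has not can connect only if they still share an older version.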

Pricing is $29/mo (credit card required), which includes $20 in API credits. Smart routing automatically directs simple tasks to lighter models and reserves heavier models for tasks that need them, which can meaningfully reduce AI costs relative to unmanaged deployments. When token usage exceeds the included allocation, the agent throttles and notifies rather than generating surprise overages. For developers who want to be on the right side of the AAIF standard without building their own AAIF-compliant infrastructure, that is the practical path.
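The routing and throttling policy can be sketched in a few lines. Everything here is an assumption for illustration: the model names, per-call prices, keyword heuristic, and the `pick_model`, `CreditBudget`, and `run_task` names are hypothetical, and Rapid Claw's actual routing logic is not described in this post. What the sketch does show is the shape of the policy: a cheap tier by default, a heavier tier for tasks that look complex, and a hard stop with a notice once a call would exceed the $20 credit, rather than an overage charge.

```python
from dataclasses import dataclass, field

# Hypothetical model tiers and per-call prices in USD; not actual Rapid Claw models or rates.
PRICES = {"light-model": 0.002, "heavy-model": 0.05}
HEAVY_HINTS = ("refactor", "analyze", "plan", "multi-step")


def pick_model(task: str) -> str:
    """Toy complexity heuristic: long prompts or 'hard' keywords go to the heavier tier."""
    lowered = task.lower()
    if len(task) > 500 or any(hint in lowered for hint in HEAVY_HINTS):
        return "heavy-model"
    return "light-model"


@dataclass
class CreditBudget:
    remaining: float = 20.00  # the $20 of included API credits
    notices: list[str] = field(default_factory=list)

    def try_spend(self, model: str) -> bool:
        """Deduct the call's cost, or refuse and record a notice if it would exceed the credit."""
        cost = PRICES[model]
        if cost > self.remaining:
            self.notices.append(f"Credit limit reached: paused a {model} call.")
            return False
        self.remaining -= cost
        return True


def run_task(task: str, budget: CreditBudget) -> str:
    model = pick_model(task)
    if not budget.try_spend(model):
        return "throttled"  # throttle and notify instead of billing an overage
    return model  # a real agent would send the task to this model here


budget = CreditBudget()
print(run_task("Summarize this email", budget))              # light-model
print(run_task("Plan a multi-step data migration", budget))  # heavy-model
```

A production router would use a better complexity signal than substring matching, but the budget check belongs in the same place: before the call is made, not after the bill arrives.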