Agent Engineering · 16 min read · Published April 20, 2026

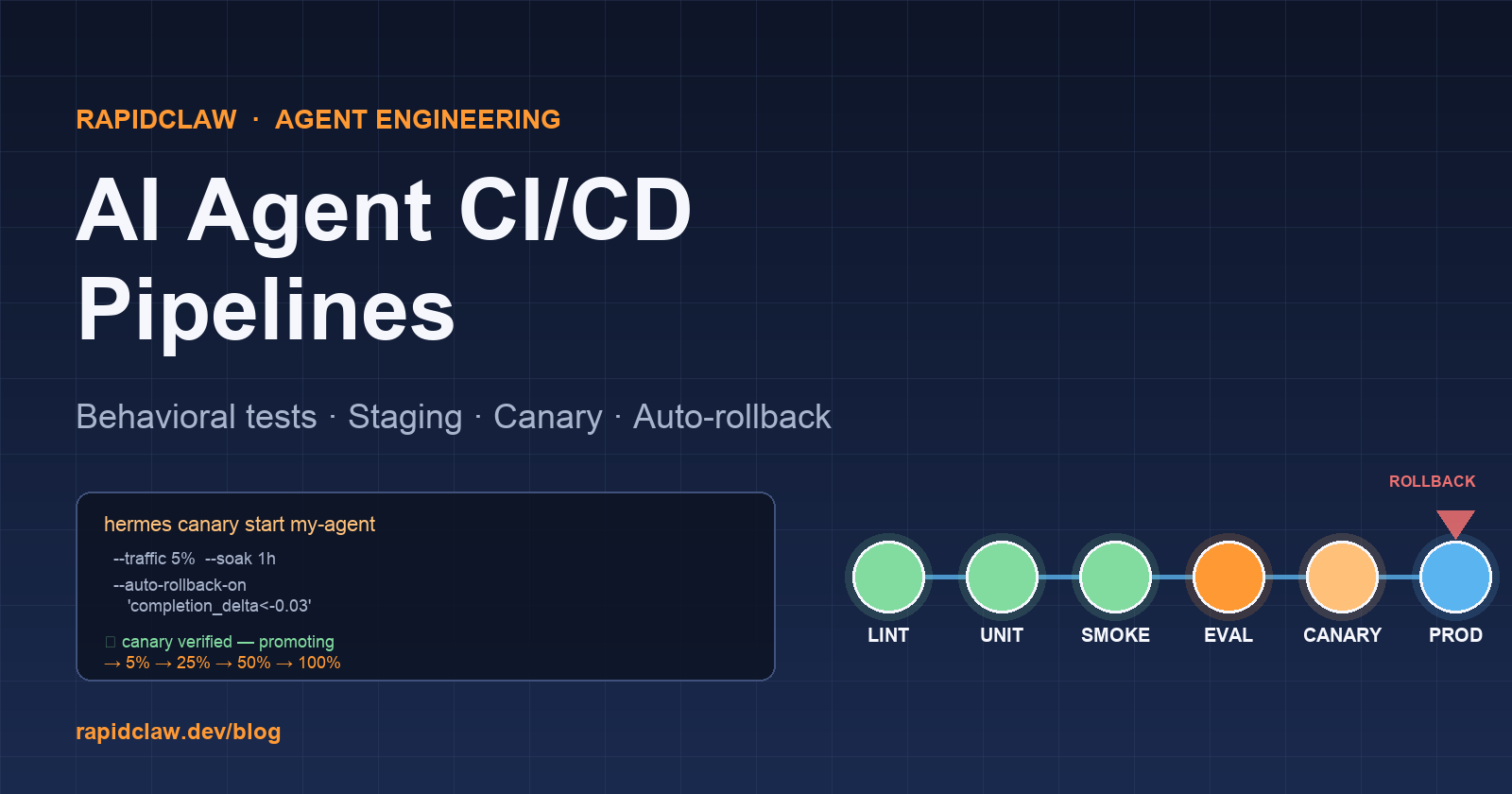

AI Agent CI/CD Pipeline: Shipping Agents Safely

Traditional CI/CD pipelines assume deterministic software — if the tests passed, the code works. Agents are stochastic, tool-coupled, and their behavior drifts with every prompt and model change. Here is the complete playbook for building an AI agent CI/CD pipeline that catches regressions before they reach users.

Why agent CI/CD is different

A traditional web-service pipeline is a pyramid: unit tests, a handful of integration tests, maybe a few smoke tests on a staging URL, then a rolling deploy. The whole scheme assumes that a passing test suite implies a working service. Agents break that assumption in four ways.

First, behavior is stochastic. The same prompt can produce different tool-call sequences across runs. A unit test that asserts "the agent calls search() exactly once" will flake — not because the agent is broken, but because reasoning paths vary. You have to gate on distributional behavior, not single-run behavior.

Second, inputs are unbounded. Your users will type things your test set never covered. Coverage as a percentage of source lines is meaningless; what matters is coverage of intents, tool-profiles, and failure modes. A CI run that hits 95% line coverage on the agent framework code but only 12 task categories in the eval set is telling you nothing useful about whether to ship.

Third, the model under the hood is a dependency you don't control. When Anthropic or OpenAI updates their model — or when you upgrade your self-hosted checkpoint — your agent's behavior can change in ways nothing in your repo reveals. CI has to re-run the full eval on model bumps, not just code bumps.

Fourth, a broken agent costs real money, silently. A bad deploy of a stateless web service throws 500s you'll notice in minutes. A bad agent deploy keeps running, keeps billing tokens, and produces outputs that look plausible until a customer complaint three days later. Your pipeline must gate on cost and trajectory shape, not just on error rate.

The result: agent CI/CD needs behavioral tests, staging environments that are indistinguishable from prod, canary deployments, automated rollback, and cost/quality gates at every stage. Below is how we build that at RapidClaw.

The stages of a real agent pipeline

Our reference pipeline has six stages. Each stage has a pass/fail gate, and a failed gate blocks promotion to the next. This is the same shape for both OpenClaw (Python) and Hermes (YAML) agents, just with different runners.

- Lint & static analysis — schema-validate config, type-check Python, scan prompts for known anti-patterns.

- Unit + contract tests — mock the LLM, assert tool-call shapes and error handling. Deterministic, fast, under 90s.

- Smoke eval (20–30 tasks) — real model, no mocks, gate on completion rate drop > 5 points from last green run.

- Full behavioral eval (300+ tasks) — stratified by difficulty and category, gate on p95 latency, cost median, and rubric quality.

- Staging canary — deploy to staging, shadow 1% of replayed production traffic for 1 hour, diff trajectories.

- Prod canary → graduated rollout — 5% → 25% → 50% → 100% with automated rollback triggers at every step.

Stages 1 and 2 are cheap (under $1 of compute) and run on every push. Stages 3 and 4 are expensive ($10–$80 per run) and run on merges to main. Stages 5 and 6 only run on explicit deploy triggers. See our agent evaluation benchmarks guide for how to build the eval harness these stages depend on.

Testing agent behavior in CI

Behavioral tests are the single biggest difference between agent pipelines and traditional pipelines. They answer "does the agent still do the right thing for the things we've seen it do wrong before?" — which is the question customers actually care about.

The pattern is stratified, seeded, and distributional:

- Stratified: Your eval set is tagged by category (filing, summarization, code, routing), difficulty (easy / medium / hard), and tool-profile (tool-heavy, reasoning-heavy, memory-heavy). Every report slices on those tags.

- Seeded: Each task runs with a fixed seed for any non-LLM randomness, and a fixed temperature for the LLM. This makes flakes traceable to agent changes rather than RNG.

- Distributional: Run each task 3–5 times. Report the median rubric score, the p95 latency, and the fraction of runs that completed. A single-run eval is a single-sample experiment — useless for gating.

Related reading: AI agent testing in production and debugging AI agents step-by-step.

OpenClaw in GitHub Actions

Here's a complete .github/workflows/agent-ci.yml for an OpenClaw agent. It runs lint → unit tests → smoke eval → full eval, blocks the merge on any gate failure, and uploads the report artifacts for debugging.

# .github/workflows/agent-ci.yml

name: Agent CI

on:

pull_request:

branches: [main]

push:

branches: [main]

concurrency:

group: agent-ci-${{ github.ref }}

cancel-in-progress: true

jobs:

lint:

runs-on: ubuntu-latest

steps:

- uses: actions/checkout@v4

- uses: actions/setup-python@v5

with: { python-version: "3.12" }

- run: pip install -r requirements.txt

- run: ruff check .

- run: mypy openclaw_agent/

- run: openclaw config validate config/agent.yaml

unit:

needs: lint

runs-on: ubuntu-latest

steps:

- uses: actions/checkout@v4

- uses: actions/setup-python@v5

with: { python-version: "3.12" }

- run: pip install -r requirements.txt -r requirements-dev.txt

- run: pytest tests/unit/ -x --maxfail=3

- run: pytest tests/contract/ -x

smoke-eval:

needs: unit

runs-on: ubuntu-latest

timeout-minutes: 10

env:

ANTHROPIC_API_KEY: ${{ secrets.ANTHROPIC_API_KEY }}

steps:

- uses: actions/checkout@v4

- uses: actions/setup-python@v5

with: { python-version: "3.12" }

- run: pip install -r requirements.txt

- name: Run smoke eval (30 tasks)

run: |

python evals/run_eval.py \

--dataset evals/smoke.jsonl \

--out evals/runs/smoke-${{ github.sha }}.jsonl \

--baseline evals/baselines/smoke-main.json \

--gate completion_rate_delta=-0.05 \

--gate latency_p95_max=6.0

- uses: actions/upload-artifact@v4

with:

name: smoke-eval-${{ github.sha }}

path: evals/runs/smoke-${{ github.sha }}.jsonl

full-eval:

if: github.ref == 'refs/heads/main'

needs: smoke-eval

runs-on: ubuntu-latest

timeout-minutes: 45

env:

ANTHROPIC_API_KEY: ${{ secrets.ANTHROPIC_API_KEY }}

steps:

- uses: actions/checkout@v4

- uses: actions/setup-python@v5

with: { python-version: "3.12" }

- run: pip install -r requirements.txt

- name: Run full eval (300+ tasks, 3 repeats each)

run: |

python evals/run_eval.py \

--dataset evals/full.jsonl \

--repeats 3 \

--parallelism 8 \

--out evals/runs/full-${{ github.sha }}.jsonl \

--baseline evals/baselines/full-main.json \

--gate completion_rate_delta=-0.02 \

--gate quality_mean_delta=-0.10 \

--gate latency_p95_max=6.0 \

--gate cost_median_max=0.05

- uses: actions/upload-artifact@v4

with:

name: full-eval-${{ github.sha }}

path: evals/runs/full-${{ github.sha }}.jsonl

The critical bit is the --gate flags. Each gate is a named expression that the eval runner evaluates against the report and the baseline; any failing gate returns a non-zero exit code which fails the job. completion_rate_delta=-0.05 means "block if completion rate drops more than 5 points from the stored baseline for this branch." Baselines are stored as JSON in the repo and updated by a scheduled job that promotes the latest green run on main.

Hermes in GitLab CI

Hermes is declarative — the pipeline just invokes hermes subcommands that already know how to read your eval.yaml and agent.yaml. Here's the equivalent in GitLab CI:

# .gitlab-ci.yml

stages: [lint, test, smoke, eval, canary, promote]

variables:

HERMES_VERSION: "1.14"

.hermes-base:

image: ghcr.io/rapidclaw/hermes-ci:${HERMES_VERSION}

before_script:

- hermes auth login --token "$HERMES_TOKEN"

lint:

stage: lint

extends: .hermes-base

script:

- hermes lint agents/ flows/ eval.yaml

unit:

stage: test

extends: .hermes-base

script:

- hermes test agents/ --suite unit --fail-fast

- hermes test agents/ --suite contract

smoke-eval:

stage: smoke

extends: .hermes-base

timeout: 10m

script:

- hermes eval run eval.smoke.yaml

--baseline baselines/smoke-main.json

--gate completion_rate_delta=-0.05

--gate latency_p95_max=6.0

--out reports/smoke-$CI_COMMIT_SHORT_SHA.jsonl

artifacts:

paths: [reports/]

expire_in: 30d

full-eval:

stage: eval

extends: .hermes-base

only: [main]

timeout: 45m

script:

- hermes eval run eval.full.yaml

--repeats 3 --parallelism 8

--baseline baselines/full-main.json

--gate completion_rate_delta=-0.02

--gate quality_mean_delta=-0.10

--gate latency_p95_max=6.0

--gate cost_median_max=0.05

--out reports/full-$CI_COMMIT_SHORT_SHA.jsonl

artifacts:

paths: [reports/]

expire_in: 90d

canary:

stage: canary

extends: .hermes-base

only: [main]

when: manual

script:

- hermes deploy agents/my-agent --env staging

- hermes canary start my-agent

--env staging --traffic 1

--shadow-from prod-replay

--duration 1h

- hermes canary verify my-agent

--max-completion-delta -0.03

--max-latency-p95-delta 0.30

--max-cost-delta 0.20

promote:

stage: promote

extends: .hermes-base

only: [main]

when: manual

script:

- hermes promote my-agent --from staging --to prod

--strategy graduated

--steps "5%:30m,25%:1h,50%:2h,100%:stable"

--auto-rollback-on "completion_delta<-0.03,latency_p95_delta>0.30"

The promote job is the whole point: a single command describes the entire graduated rollout plus the rollback rule. Hermes handles the state transitions and the rollback automatically, so your pipeline YAML stays readable.

Staging environments for agents

A staging environment for a stateless web service can be a cheap stub. For agents, staging must be behaviorally indistinguishable from production or you'll miss the regressions you built staging to catch. That means:

- Real models. Staging uses the same model vendor and version as prod. No "we'll use the cheaper model in staging" — that's how you ship a change that works with Sonnet 4.5 but breaks on Sonnet 4.6.

- Real (or faithfully-mocked) tools. External APIs get sandboxed counterparts. Your vector DB in staging is a copy of prod's with the same index. Your secrets vault in staging points at non-prod credentials but the same schema.

- Real traffic, replayed. Snapshot a week of anonymized prod trajectories and replay them against staging on every deploy. This is the single best catch-mechanism for subtle regressions and it's criminally under-used.

- Real observability wiring. Staging exports traces, cost metrics, and guardrail trips to the same stack as prod (separate namespace). See our observability guide for how to wire the traces.

- Isolated blast radius. Staging cannot write to prod databases, send prod emails, or call external state-changing APIs without a whitelist.

Canary deployments for agents

A canary deploy for a web service is usually "route 5% of requests to the new version and watch error rate." For agents you need more dimensions and a longer soak:

- Traffic split by variant tag. Every inbound request gets a variant ID based on a deterministic hash of user or session ID. Log the variant ID on every trace.

- Dual-KPI gating. Canary passes only if both technical metrics (completion rate, latency p95, cost median) and business metrics (user rating, escalation rate, retry rate) stay within the allowed drift window.

- Soak before graduating. Hold each step (5%, 25%, 50%) for long enough to see the slow failures — generally 1 hour minimum, 4 hours for higher-risk changes.

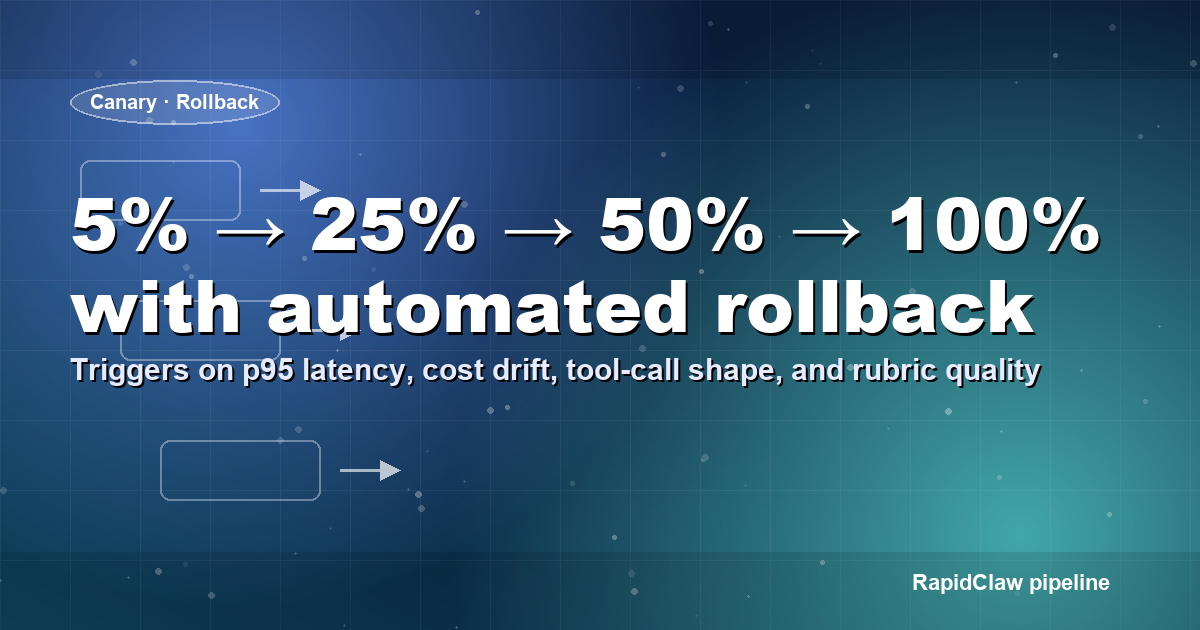

- Graduated rollout. 5% → 25% → 50% → 100% with automated gate checks between each step. Any gate failure triggers automatic rollback.

- Kill switch. A single flag that reverts all traffic to the previous version within 30s. Wire it to your on-call paging tool.

Automated rollback triggers

Rollback discipline is where most teams fall down. The mistake is making rollback a human decision — "we'll see if the numbers recover by morning." By morning you've logged 400,000 bad traces. Write the rollback rules in advance and automate them.

The pattern we use at RapidClaw:

- Completion-rate rule: revert if completion rate on the canary slice drops more than 3 points below the control slice over any 30-minute window with at least 200 tasks.

- Latency-p95 rule: revert if canary p95 latency exceeds control p95 by more than 30% for two consecutive 15-minute windows.

- Cost rule: revert if canary median cost per task exceeds control median by more than 25% with at least 500 tasks.

- Guardrail rule: revert immediately if guardrail-trip count on the canary exceeds 3x the control rate.

- Manual abort: a single

hermes canary abortor GitHub Actions workflow_dispatch that reverts in under 30s, always available, no approval needed.

Every rollback should write an incident-record automatically — timestamp, triggering rule, variant IDs, the final metric values, and a link to the traces. This is how you build a rollback-ledger you can learn from. See why AI agents fail in production for patterns that keep showing up in those ledgers.

Promoting agents through environments

Promotion is the piece that ties the pipeline together: how does a change get from dev → staging → canary → prod? The principle is promote artifacts, not source. You build the agent bundle once (code + prompts + tool configs + model version pin) and that exact bundle moves through the stages. No rebuilding between stages — that's how you introduce non-determinism.

Our reference promotion rules:

- Every merge to

mainbuilds an immutable bundle tagged with the commit SHA and a semantic version. - The bundle auto-deploys to dev and runs the full eval. If green, it becomes eligible for staging.

- Promotion to staging is a single-click action (or merge of a PR that bumps

staging.yaml). It triggers the staging canary workflow. - Promotion to prod requires: (a) staging canary green for 1 hour, (b) two human approvals from the on-call rotation, (c) no open P0 or P1 incident. Hermes/Argo enforce these as required checks.

- Every prod deploy starts at 5% canary. The pipeline cannot skip the canary step; there is no "deploy to 100%" button.

Secrets and model version management

Two sources of silent CI breakage deserve their own section: secret handling and model version management.

For secrets, the only acceptable pattern is short-lived, per-environment credentials injected at deploy time. Your CI runner holds a machine identity (IAM role, workload identity, or OIDC token) that exchanges for the relevant API keys. Never bakeANTHROPIC_API_KEY into an image; never commit even encrypted credentials; never share a single key between dev, staging, and prod. For a deeper dive on agent-specific identity considerations, see AI agent authentication & identity management.

For model versions, pin the exact version string in the agent config (model: claude-sonnet-4-6, not claude-sonnet-latest) and treat model bumps as code changes: open a PR that updates the pin, let the full eval run, only merge if green. This single practice eliminates the most common flavor of "it worked yesterday" incident.

Gate design: what to block on and what to warn on

Every gate you add to the pipeline is a tax on developer velocity. A flaky or over-tight gate will be bypassed, ignored, or disabled within two weeks. The pattern we use: small number of hard blocks, larger number of soft warnings.

Hard blocks (deploy fails, PR can't merge): completion-rate drop greater than 5 points on smoke, 2 points on full; p95 latency exceeds absolute SLO (e.g., 6s for interactive); any guardrail-violation count > 0 on a safety-set run; any unit or contract test failure. Keep the list short — every hard block should earn its place.

Soft warnings (annotate the PR, require acknowledgement, but don't block): cost median up more than 10%, quality rubric mean drop of 0.1–0.2 points, retry rate up, any tagged category regressed by 3+ points (useful early signal before it compounds). Soft warnings train the team to notice drift without creating a gate-fatigue loop. Use the warn variant of the same gate expressions rather than a separate system — one fewer thing to maintain.

Baseline update policy: the baseline advances automatically only when a green run on main has a non-trivial improvement (completion rate up ≥ 1 point, or cost median down ≥ 10%). Otherwise the previous green baseline holds. Automatic baseline advancement on every green run is the single biggest cause of slow-cooking regressions: you'll ratchet your quality floor down 0.5 points at a time until you're a full grade lower than where you started, with no individual gate ever failing.

Pipeline cost economics

A realistic agent CI/CD pipeline runs in the $500–$3,000/month range for a mid-sized team. The cost breakdown most people miss:

- Smoke eval on every PR: at $1–3 per run and 30 PRs/week, that's $120–360/month. Cheap — keep it.

- Nightly full eval on main: at $30–80 per run, that's $900–2,400/month. The line item that gets questioned — don't cave. It is the single highest-ROI thing you spend.

- Canary compute: usually negligible since you're serving production traffic anyway; the incremental cost is the shadow/replay step, typically $50–200/month.

- Engineer time: the biggest hidden cost. Expect 40–80 engineering hours up front to stand up the first version and 5–15 hours/month to maintain. Budget it or the pipeline won't survive year two.

At the small end, skip the nightly full eval and run it weekly instead — you'll catch regressions 6 days late but save ~$2k/month. At the large end, running the full eval on every main merge is a legitimate choice if your deploy velocity justifies it. See our agent token-cost analysis for the underlying economics.

What to build this week

- Pick one agent. Stand up stages 1–3 (lint, unit, smoke eval) in your existing CI provider. Should take half a day.

- Commit a

smoke.jsonlwith 20–30 tasks across your top use cases, plus asmoke-main.jsonbaseline. - Wire the completion-rate-delta gate. Run the pipeline and intentionally break something to confirm the gate fails.

- Set up a staging environment that mirrors prod (same model version, same tool sandboxes) and wire the replay-from-prod mechanism. This is the highest-leverage stage.

- Add the canary + graduated-rollout stage with automated rollback rules. Write the rollback rules down before you ship the pipeline.

- Finally, add the full nightly eval on

mainand the model-version pin discipline.

Using RapidClaw's managed pipeline

You can absolutely roll your own — the YAML above is complete. But by the time you've built the baseline updater, the replay-from-prod service, the cross-run diff viewer, the rollback ledger, and the cost-gated artifact store, you've shipped a small internal platform. RapidClaw's managed hosting bundles all of that on top of your OpenClaw or Hermes agents so your team stays focused on the rubrics and the tasks. See deploy OpenClaw to production and enterprise AI agent deployment for the full stack story.

Related deeper reads: AI agent evaluation benchmarks, AI agent observability, testing AI agents in production, and why AI agents fail in production.

Frequently asked questions

Skip the pipeline build

Rapid Claw runs the deployment, rollback, and monitoring layer for OpenClaw agents. $29/mo to start. Two-founder shop, Bali-based.

See Rapid Claw pricing