GDPR & EU AI Act Compliance for AI Agents

April 20, 2026·18 min read

On 2 August 2026 the EU AI Act’s high-risk rules, transparency obligations, and general-purpose AI enforcement powers become fully applicable. If your AI agent touches EU users or runs on EU personal data, the overlap with GDPR turns every tool call into a compliance event. This guide covers risk classification, documentation, logging, and a production checklist teams can actually ship against.

TL;DR

Most EU AI Act obligations for AI agents become enforceable on 2 August 2026. Most enterprise agents land in the high-risk tier if they make decisions about hiring, credit, insurance, healthcare, or public services. Requirements: Annex IV technical documentation, Article 12 automatic event logging (logs kept for at least six months), Article 14 human oversight, Article 9 risk management, and Article 50 transparency. GDPR Article 22 layers on top whenever the agent makes solely automated decisions with legal or similarly significant effects. Penalties for high-risk non-compliance: up to €15M or 3% of worldwide annual turnover, whichever is higher. Rapid Claw ships the infrastructure controls (EU residency, immutable logs, audit trails, DPA) that let teams focus on model-level governance instead of rebuilding compliance plumbing.


1. The 2 August 2026 Deadline: What Actually Changes

The EU AI Act entered into force on 1 August 2024. Prohibited practices (Article 5) and AI literacy duties (Article 4) became applicable on 2 February 2025. Obligations for providers of general-purpose AI (GPAI) models took effect on 2 August 2025. The largest and most consequential wave of obligations becomes applicable on 2 August 2026, and that is the deadline every team shipping AI agents into the European market needs to plan around.

From that date, the Commission can fine GPAI providers, Member State authorities begin enforcing the high-risk rules for Annex III systems, notified bodies carry out conformity assessments where the Act requires them, and the Article 50 transparency obligations apply in full. If a high-risk agent is not compliant by then, you are exposed to administrative fines of up to €15 million or 3% of worldwide annual turnover, whichever is higher. For prohibited practices, the ceiling is €35 million or 7%.
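The “whichever is higher” rule is simple arithmetic, but it is easy to misapply when budgeting for risk. The sketch below computes the fine ceiling from worldwide annual turnover. The function and tier names are hypothetical. The sketch also encodes the Article 99(6) rule that the lower of the two amounts applies to SMEs, including start-ups. It gives an upper bound only, because actual fines are set case by case.

```python
# Fine ceilings from Article 99 of the EU AI Act: (fixed cap in EUR, percent of turnover).
# Tier names are hypothetical labels for this sketch.
FINE_TIERS = {
    "prohibited_practice": (35_000_000, 7),   # Article 5 violations
    "operator_obligation": (15_000_000, 3),   # e.g. high-risk duties, Article 50 transparency
}

def fine_ceiling_eur(tier: str, worldwide_turnover_eur: int, sme: bool = False) -> int:
    """Upper bound of an administrative fine for the given tier.

    Large undertakings face whichever amount is higher;
    SMEs and start-ups face whichever is lower (Article 99(6)).
    """
    cap_eur, percent = FINE_TIERS[tier]
    # Integer arithmetic avoids floating-point rounding on large turnovers.
    turnover_share = worldwide_turnover_eur * percent // 100
    return min(cap_eur, turnover_share) if sme else max(cap_eur, turnover_share)
```

For example, a group with €2 billion in turnover faces a ceiling of €60 million for a high-risk breach, because 3% of turnover exceeds the €15 million cap. A group with €200 million in turnover stays at the €15 million cap.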

There is one commonly misread carve-out: Article 6(1)’s classification rule for high-risk AI systems used as safety components of regulated products (Annex I) applies from 2 August 2027. But if your agent falls under Annex III (most autonomous enterprise agents), the 2026 date is binding. Don’t assume you have an extra year.

| Date | What Applies |
|---|---|
| 2 Feb 2025 | Article 5 (prohibited AI), Article 4 (AI literacy) |
| 2 Aug 2025 | GPAI provider obligations, governance bodies operational |
| 2 Aug 2026 | Annex III high-risk rules, Article 50 transparency, Commission GPAI enforcement, Member State supervision |
| 2 Aug 2027 | Article 6(1) for Annex I safety-component systems; legacy GPAI models placed on the market before Aug 2025 must be compliant |
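If you track several systems against this timeline, it can help to encode the timeline as data. Below is a minimal sketch that assumes the dates in the timeline table. `MILESTONES`, `applicable_on`, and `next_milestone` are hypothetical helpers, not part of any official tooling.

```python
from datetime import date
from typing import Optional, Tuple

# Application dates from the timeline, in chronological order.
MILESTONES = [
    (date(2025, 2, 2), "Article 5 (prohibited AI), Article 4 (AI literacy)"),
    (date(2025, 8, 2), "GPAI provider obligations, governance bodies operational"),
    (date(2026, 8, 2), "Annex III high-risk rules, Article 50 transparency, "
                       "Commission GPAI enforcement, Member State supervision"),
    (date(2027, 8, 2), "Article 6(1) for Annex I safety components; "
                       "legacy GPAI models must be compliant"),
]

def applicable_on(day: date) -> list:
    """Labels of every milestone that already applies on `day`."""
    return [label for start, label in MILESTONES if start <= day]

def next_milestone(day: date) -> Optional[Tuple[date, str]]:
    """The first milestone strictly after `day`, or None if all have passed."""
    upcoming = [(start, label) for start, label in MILESTONES if start > day]
    return upcoming[0] if upcoming else None
```

On 20 April 2026, `next_milestone` returns the 2 August 2026 entry, which is 104 days away.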

2. Risk Classification for Autonomous Agents

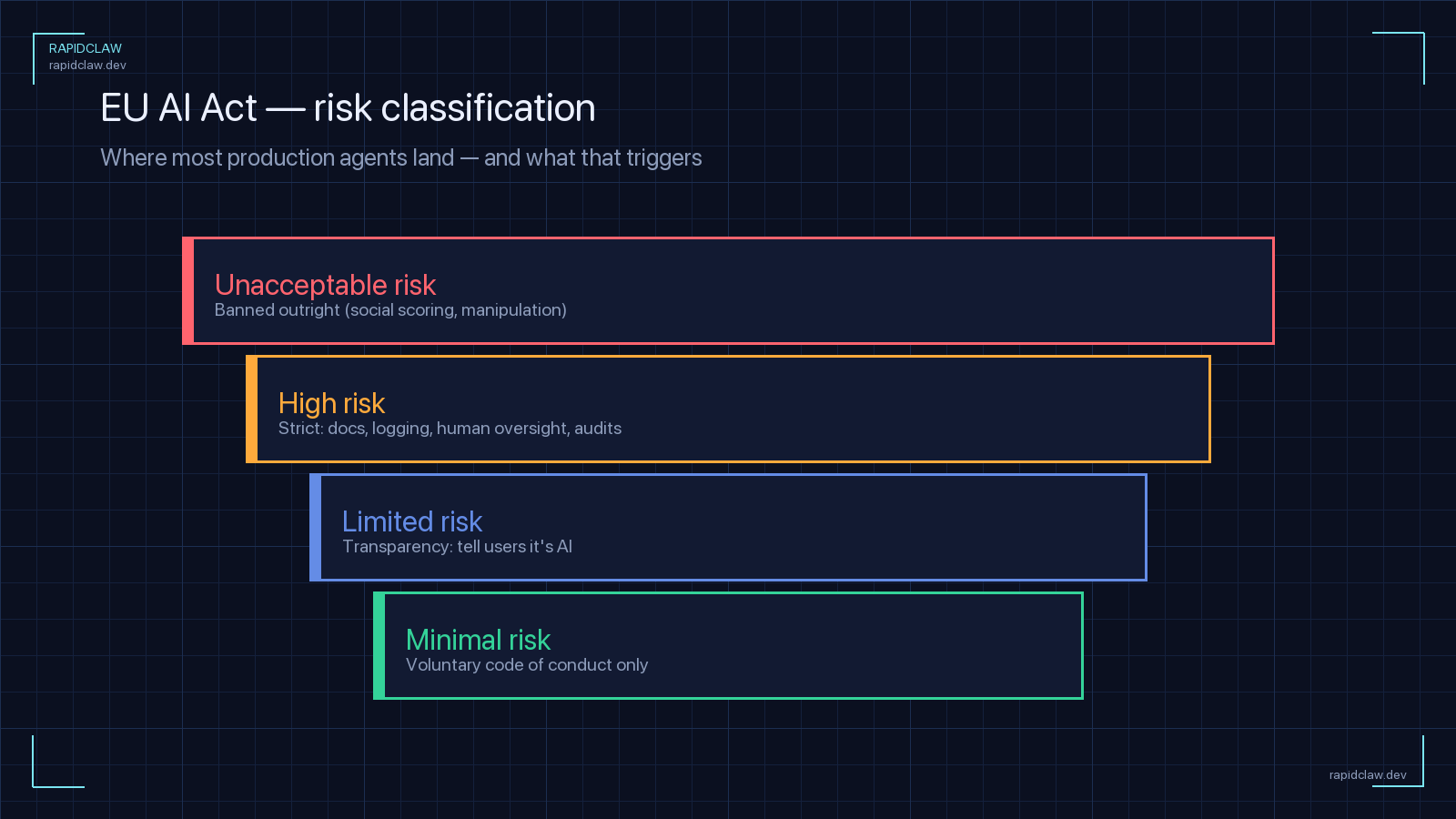

The Act’s risk-based structure has four tiers: prohibited (Article 5), high-risk (Article 6 and Annex III), limited risk (transparency only, Article 50), and minimal risk (no specific obligations). For autonomous agents the honest read is: most enterprise agents that do real work are high-risk. Simple content-generation assistants and consumer chat sit in limited risk; agents that drive decisions about people usually do not.

Annex III lists the use cases that pull an AI system — including an agent — into the high-risk category. Any of these applies to agents as much as to classic ML pipelines:

| Annex III area | Example agent use cases |
|---|---|
| Employment & HR | CV screening, performance scoring, termination decisions, task allocation |
| Credit & insurance | Creditworthiness and credit scoring (systems used to detect financial fraud are excluded), risk assessment and pricing for life and health insurance |
| Education & training | Admission scoring, exam grading, proctoring, student monitoring |
| Essential services | Eligibility for public assistance benefits and healthcare services, emergency-call dispatch and patient triage |
| Law enforcement | Risk assessment of persons, evidence evaluation, profiling |
| Migration & border control | Visa risk assessment, biometric identification at borders |
| Administration of justice | Case-law research tools that directly influence rulings, dispute-resolution assistants |
| Biometric ID & categorisation | Remote biometric identification, biometric categorisation, emotion recognition |

Agents complicate classification because they compose capabilities. A single agent may book meetings (minimal risk), draft emails (limited risk), and then automatically adjust salary-review recommendations (high-risk, Annex III HR). If any tool call or sub-agent lands in a high-risk area, the entire system inherits the high-risk obligations. You can’t carve out a compliant core and leave the tool layer unregulated.
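The inheritance rule can be made mechanical. Assess each tool or sub-agent separately, then take the most severe tier as the tier of the whole agent. Below is a minimal sketch. The tool names and their tier assignments are hypothetical inputs from your own assessment, and no code can do that assessment for you.

```python
from enum import IntEnum

class RiskTier(IntEnum):
    """AI Act tiers ordered by severity, so max() picks the strictest."""
    MINIMAL = 0
    LIMITED = 1
    HIGH = 2
    PROHIBITED = 3

def system_tier(tool_tiers: dict) -> RiskTier:
    """The agent as a whole inherits the most severe tier of any tool or sub-agent."""
    return max(tool_tiers.values(), default=RiskTier.MINIMAL)

def high_risk_components(tool_tiers: dict) -> list:
    """Tools that pull the agent into (at least) the high-risk tier."""
    return sorted(name for name, tier in tool_tiers.items() if tier >= RiskTier.HIGH)

# Hypothetical tool inventory mirroring the example in the text.
tools = {
    "book_meeting": RiskTier.MINIMAL,
    "draft_email": RiskTier.LIMITED,
    "adjust_salary_review": RiskTier.HIGH,  # Annex III employment use case
}
print(system_tier(tools).name, high_risk_components(tools))  # HIGH ['adjust_salary_review']
```

The `high_risk_components` list doubles as the starting point for the per-tool risk assessments that Annex IV documentation needs.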

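Derogation trap: Article 6(3) lets providers of Annex III systems self-declare a system as not high-risk in narrow cases. The system must perform a narrow procedural task, improve the result of a previously completed human activity, detect decision-making patterns without replacing or influencing human judgement absent proper human review, or perform a preparatory task to an assessment. The derogation never applies to a system that profiles natural persons. If you rely on it, you must still document the assessment and register the system in the EU database. Article 80 lets market surveillance authorities re-evaluate systems classified as non-high-risk and fine providers who misclassified them to circumvent the rules. Don’t use the derogation as an escape hatch.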
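Because the derogation must be documented anyway, it can help to record the assessment as structured data. Below is a sketch under stated assumptions. The field names are hypothetical, the four condition flags paraphrase Article 6(3), and the profiling flag encodes the rule that profiling systems stay high-risk. Each boolean still needs a human, legally reviewed answer.

```python
from dataclasses import dataclass

@dataclass
class DerogationAssessment:
    """Hypothetical record of an Article 6(3) self-assessment for one Annex III system."""
    narrow_procedural_task: bool
    improves_completed_human_activity: bool
    detects_patterns_with_human_review: bool
    preparatory_task_only: bool
    profiles_natural_persons: bool
    rationale: str = ""
    registered_in_eu_database: bool = False

    def derogation_available(self) -> bool:
        # Profiling of natural persons keeps an Annex III system high-risk regardless.
        if self.profiles_natural_persons:
            return False
        return any([
            self.narrow_procedural_task,
            self.improves_completed_human_activity,
            self.detects_patterns_with_human_review,
            self.preparatory_task_only,
        ])

    def open_obligations(self) -> list:
        """Paperwork still owed when relying on the derogation."""
        gaps = []
        if not self.rationale.strip():
            gaps.append("document the assessment rationale")
        if not self.registered_in_eu_database:
            gaps.append("register the system in the EU database")
        return gaps
```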

3. GDPR Overlap: Article 22 and Agent Processing

The EU AI Act does not replace GDPR — it sits on top of it. Wherever your agent processes personal data of EU residents you are simultaneously a controller or processor under GDPR, and every GDPR requirement (lawful basis, purpose limitation, data minimisation, DPIA, records of processing, breach notification, data-subject rights) applies in parallel. For AI agents, the two regulations intersect most sharply at four points: Article 22, DPIAs, Article 28 processor contracts, and international transfers.

Article 22 — solely automated decisions

Article 22 of GDPR gives data subjects the right not to be subject to decisions based solely on automated processing that produce legal effects or similarly significantly affect them. Article 22(2) permits such decisions in only three cases: where the decision is necessary for entering into or performing a contract with the data subject, where it is authorised by Union or Member State law that lays down suitable safeguards, or where it is based on the data subject’s explicit consent.

Even where one of these exceptions applies, you must provide human intervention, let the subject express their view, and provide a mechanism to contest the decision. The EDPB and national DPAs have been explicit: a human who rubber-stamps the agent’s output is not meaningful human involvement. The reviewer must have the authority, the competence, and the actual time to override.

This is where agent architecture matters more than the model. If you run a LangGraph or CrewAI flow where one agent decides and another “approves” with a rubber-stamp prompt, you are still making a solely automated decision under Article 22. Designing the oversight step as real human review — with the ability to inspect the inputs, memory, and tool trajectory — is the only defensible pattern.
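One way to enforce this in code is a gate that refuses to finalise a decision with significant effects until a qualifying human review is attached. Below is a minimal sketch in which `Review` and `finalise_decision` are hypothetical names. Code can check for authority, trajectory inspection, and a written rationale. Reviewer competence and time to review remain organisational controls.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Review:
    reviewer_id: str
    is_human: bool               # an approving LLM agent does not count
    can_override: bool           # reviewer has authority to change the outcome
    inspected_trajectory: bool   # reviewer opened the inputs, memory, and tool calls
    rationale: str               # reviewer's own reasoning, not the agent's summary

def is_meaningful(review: Review) -> bool:
    """All checkable conditions for meaningful human involvement hold."""
    return (review.is_human and review.can_override
            and review.inspected_trajectory and bool(review.rationale.strip()))

def finalise_decision(decision: dict, review: Optional[Review]) -> dict:
    """Attach the review to a significant decision, or refuse to finalise it."""
    if decision.get("significant_effect") and (review is None or not is_meaningful(review)):
        raise PermissionError("significant decision needs meaningful human review (GDPR Art. 22)")
    return {**decision, "review": review}
```

With this gate, a second agent that “approves” with a prompt fails `is_human`, and the decision cannot be finalised as if a human had reviewed it.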

DPIA triggers

Article 35 of GDPR requires a Data Protection Impact Assessment whenever processing is likely to result in high risk. For AI agents, the triggers are almost always met: systematic monitoring, automated decisions with significant effects, large-scale processing of special-category data, and novel technology use. Do the DPIA before you ship — and keep it updated as the agent’s tool surface changes.
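A small screening helper can record which triggers a given agent meets. That documents the DPIA decision and makes it easy to re-run whenever the tool surface changes. The sketch below assumes the four triggers named above, and the key names are hypothetical. Treating any single trigger as requiring a DPIA is a deliberately conservative assumption, not a legal threshold.

```python
# The four triggers named in the text; keys are hypothetical identifiers.
DPIA_TRIGGERS = {
    "systematic_monitoring": "Systematic monitoring of individuals",
    "significant_automated_decisions": "Automated decisions with significant effects",
    "large_scale_special_category": "Large-scale processing of special-category data",
    "novel_technology": "Novel technology use",
}

def dpia_screening(profile: dict) -> dict:
    """Return the triggers this agent meets and a conservative DPIA recommendation."""
    met = [label for key, label in DPIA_TRIGGERS.items() if profile.get(key, False)]
    return {"triggers": met, "dpia_required": bool(met)}

def tool_surface_changed(documented_tools: set, current_tools: set) -> bool:
    """A changed tool set means the existing DPIA should be revisited."""
    return documented_tools != current_tools
```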

Article 28 processor contracts

If you run an agent on a managed platform, the platform is your processor. You need a Data Processing Addendum (DPA) under Article 28 that specifies subject matter, duration, purpose, data types, data-subject categories, and the processor’s obligations around confidentiality, security, sub-processors, and assistance with audits. Model providers that handle prompts containing personal data are sub-processors in this chain — you must disclose them and ensure flow-down DPAs exist.
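Keeping the sub-processor register in sync with the vendors the agent actually sends personal data to is easy to automate. Below is a minimal sketch in which the vendor names and the `SubProcessor` record are hypothetical. The check only compares your own records and cannot verify what a contract says.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class SubProcessor:
    name: str
    disclosed_to_customers: bool
    flow_down_dpa_signed: bool

def dpa_gaps(vendors_in_data_flow: set, register: dict) -> dict:
    """Map each vendor that receives personal data to its missing paperwork."""
    gaps = {}
    for vendor in sorted(vendors_in_data_flow):
        entry = register.get(vendor)
        if entry is None:
            gaps[vendor] = ["missing from sub-processor register"]
            continue
        issues = []
        if not entry.disclosed_to_customers:
            issues.append("not disclosed")
        if not entry.flow_down_dpa_signed:
            issues.append("no flow-down DPA")
        if issues:
            gaps[vendor] = issues
    return gaps
```

Feeding `vendors_in_data_flow` from the agent’s actual outbound calls, rather than from a hand-maintained list, catches tools that were added without updating the DPA.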

4. Annex IV Technical Documentation

Article 11 requires providers of high-risk systems to draw up technical documentation before placing the system on the market and to keep it current. Annex IV lays out the minimum contents. For AI agents, the documentation has to cover both the underlying model and the agent scaffolding (tools, memory, orchestration) as a single system.

| Section | What to Document for an AI Agent |
|---|---|
| System description | Intended purpose, versions, users, hardware, all tools the agent can call, memory store schema |
| Design specifications | Model choice, prompting strategy, tool-routing logic, guardrails, stop conditions |
| Data & data governance | Training/validation/test datasets, provenance, preprocessing, bias controls, retention |
| Risk management (Art. 9) | Foreseeable risks from tool misuse, prompt injection, data leakage, hallucinated actions; mitigations |
| Performance metrics | Accuracy, robustness, fairness metrics; tool-call success rates; trajectory-level eval results |
| Human oversight | Where humans intervene, the UI they use, escalation criteria, training plan for reviewers |
| Post-market monitoring | Telemetry, incident reporting, drift detection, complaint handling, retraining triggers |
| Logs | Sample log records, retention, integrity mechanism, access procedures |

Two agent-specific points deserve particular attention. First, the tool inventory must be complete and version-controlled, because each tool is a potential harm vector and needs its own risk assessment. Second, prompts and system messages count as design specifications. If you change the system prompt mid-release, that is a system change, and Article 11’s duty to keep documentation current means the documentation must be updated. Treat prompts like code.
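One way to treat prompts like code is to fingerprint the system prompt together with the versioned tool inventory. Store that hash alongside the Annex IV documentation. Any mismatch at deploy time then flags a system change. The sketch below assumes the tool inventory is a mapping from tool name to version string, and the function names are hypothetical.

```python
import hashlib
import json

def system_fingerprint(system_prompt: str, tool_versions: dict) -> str:
    """SHA-256 over the system prompt and tool inventory, independent of dict order."""
    canonical = json.dumps(
        {"system_prompt": system_prompt, "tools": tool_versions},
        sort_keys=True, separators=(",", ":"),
    )
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

def documentation_is_stale(documented: str, system_prompt: str, tool_versions: dict) -> bool:
    """True when the deployed configuration no longer matches the documented one."""
    return system_fingerprint(system_prompt, tool_versions) != documented
```

A release pipeline could fail the deploy whenever `documentation_is_stale` returns `True`. That forces the documentation update to happen in the same change as the prompt or tool edit.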

5. Article 12 Logging & Audit Trails

Article 12 requires high-risk systems to automatically record events (“logs”) over the lifetime of the system. The logging must enable (a) identification of situations where the system may present a risk, (b) post-market monitoring, and (c) operational monitoring by deployers. Article 19 (for providers) and Article 26(6) (for deployers) require the logs to be kept for at least six months, unless other applicable Union or Member State law provides otherwise.

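For an autonomous agent, an “event” goes far beyond a request/response pair. At minimum, record every model call, tool call, memory read and write, and human override, so that a reviewer can reconstruct the agent’s full trajectory.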

Logs have to be tamper-resistant. A practical approach is append-only storage with hash chaining or a WORM object-lock bucket, plus signed log shipping. If you’re building on OpenClaw or Hermes, our AI agent observability guide walks through the telemetry stack, and the SOC 2 & HIPAA compliance guide covers immutable log infrastructure that happens to satisfy Article 12 as a by-product.
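Hash chaining is straightforward to sketch. Each record stores the hash of the previous one, so editing, deleting, or reordering an earlier record breaks verification. Below is a minimal in-memory illustration in which `HashChainedLog` is hypothetical. In production the records would also be shipped to WORM or object-locked storage, because a chain detects tampering but does not prevent it.

```python
import copy
import hashlib
import json
import time

GENESIS_HASH = "0" * 64

def _digest(record: dict) -> str:
    """Hash a record's canonical JSON form, excluding its own hash field."""
    body = {k: v for k, v in record.items() if k != "hash"}
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

class HashChainedLog:
    """Append-only event log where every record commits to its predecessor."""

    def __init__(self) -> None:
        self.records = []

    def append(self, event_type: str, payload: dict) -> dict:
        record = {
            "seq": len(self.records),
            "ts": time.time(),
            "type": event_type,  # e.g. "model_call", "tool_call", "memory_write", "human_override"
            "payload": copy.deepcopy(payload),  # detach from the caller's mutable dict
            "prev_hash": self.records[-1]["hash"] if self.records else GENESIS_HASH,
        }
        record["hash"] = _digest(record)
        self.records.append(record)
        return record

    def verify(self) -> bool:
        """Recompute the chain; False if any record was altered, removed, or reordered."""
        prev_hash = GENESIS_HASH
        for seq, record in enumerate(self.records):
            if record["seq"] != seq or record["prev_hash"] != prev_hash:
                return False
            if _digest(record) != record["hash"]:
                return False
            prev_hash = record["hash"]
        return True
```

Publishing the latest hash to a separate system at intervals is one way to notice if the whole chain is rewritten from scratch.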

One GDPR-flavoured nuance: your Article 12 logs will inevitably contain personal data (user IDs, prompt content, tool outputs). That makes the log store itself a processing activity subject to GDPR. You need a retention policy that balances the AI Act minimum (six months, longer if other law requires it) against GDPR storage limitation (Article 5(1)(e)). In practice, 12 months is defensible for most enterprise agents. Pair it with aggressive redaction of raw prompt content after 90 days unless you have a concrete monitoring reason to keep it.
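That retention policy can be expressed as a per-record decision. The sketch below assumes the figures from the text: 12-month retention and raw-prompt redaction after 90 days. It adds a hypothetical `monitoring_hold` flag for records you have a concrete reason to keep, and it approximates six months as 183 days.

```python
from datetime import datetime, timedelta, timezone

AI_ACT_MINIMUM = timedelta(days=183)    # "at least six months", approximated in days
RETENTION = timedelta(days=365)         # 12-month policy suggested in the text
RAW_PROMPT_WINDOW = timedelta(days=90)  # redact raw prompt content after this

assert RETENTION >= AI_ACT_MINIMUM, "policy must not undercut the AI Act minimum"

def retention_action(created_at: datetime, now: datetime, monitoring_hold: bool = False) -> str:
    """Return 'keep', 'redact_prompt', or 'delete' for one log record."""
    age = now - created_at
    if age >= RETENTION:
        return "delete"
    if age >= RAW_PROMPT_WINDOW and not monitoring_hold:
        return "redact_prompt"  # keep the event metadata, drop raw prompt text
    return "keep"
```

A nightly job would apply the returned action to each record. Redaction should be idempotent, because a record returns `redact_prompt` every day between day 90 and day 365.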

6. Human Oversight (Article 14)

Article 14 requires providers of high-risk systems to design them so that humans can effectively oversee operation. This is the single control that tends to catch agent teams off-guard, because autonomy is usually the point of the product. The regulation doesn’t demand that a human approves every action — it demands that a human can intervene, correct, or stop.

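For autonomous agents, the minimum baseline has four parts. First, approval policies for high-impact tools. Second, a control that lets a human stop the agent at any point. Third, a trajectory inspector that shows the inputs, memory, and tool calls behind each action. Fourth, an audit trail of what reviewers approved, changed, or rejected.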

If you’re running completely unattended flows — a nightly agent that rewrites onboarding emails, for example — the oversight control can be asynchronous: daily review of trajectories, spot-check sampling, and a rollback procedure. What isn’t acceptable is no oversight in the loop.
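Two oversight primitives, a per-tool approval gate and a stop switch, can be sketched as a small policy object that every tool call passes through before it executes. Below is a minimal illustration. `OversightPolicy`, `AgentStopped`, and the tool names are hypothetical, and a real deployment would also write each decision to the audit log.

```python
import threading
from dataclasses import dataclass, field
from typing import Optional

class AgentStopped(RuntimeError):
    """Raised once a human operator has halted the agent."""

@dataclass
class OversightPolicy:
    approval_required: set = field(default_factory=set)  # tools that need human sign-off
    _stopped: threading.Event = field(default_factory=threading.Event, init=False, repr=False)

    def stop(self) -> None:
        """Human 'stop' control: blocks every subsequent tool call, from any thread."""
        self._stopped.set()

    def check(self, tool_name: str, approved_by: Optional[str] = None) -> None:
        """Call before executing a tool; raises if the call must not proceed."""
        if self._stopped.is_set():
            raise AgentStopped("agent halted by a human operator")
        if tool_name in self.approval_required and approved_by is None:
            raise PermissionError(f"'{tool_name}' requires human approval")
```

`threading.Event` is used so that a stop issued from a UI or API thread takes effect for an agent loop running on another thread.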

7. Transparency Obligations (Article 50)

Article 50 applies regardless of risk tier, so it reaches agents that are not high-risk. Four obligations matter for agent builders:

50(1) — Interaction disclosure

When a natural person interacts with an AI system, they must be informed unless the context makes it obvious. For chat and voice agents, put the disclosure in the first turn and in surfaces where the user can re-check it.
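Below is a minimal sketch of the first-turn pattern. It assumes a chat backend that returns a response object to the UI. The disclosure is prepended to the first reply and also sent as a separate field on every turn, so the interface can keep it visible. The wording and field names are hypothetical.

```python
# Hypothetical disclosure wording; adapt to your product and languages.
AI_DISCLOSURE = "You are talking to an AI assistant, not a human."

def build_reply(turn_index: int, text: str) -> dict:
    """Wrap an agent reply with an Article 50(1)-style interaction disclosure."""
    return {
        # First turn: disclose inline, before any other content.
        "text": f"{AI_DISCLOSURE}\n\n{text}" if turn_index == 0 else text,
        # Every turn: expose the disclosure so the UI can show it persistently.
        "disclosure": AI_DISCLOSURE,
    }
```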

50(2) — AI-generated content labelling

Providers of systems that generate synthetic audio, image, video, or text must embed machine-readable markers (e.g., C2PA, watermarking) identifying the content as AI-generated. This hits agent products that auto-create assets — marketing copy, product imagery, generated reports.

50(3) — Emotion & biometric categorisation

Deployers of emotion-recognition or biometric categorisation systems must notify users. If your agent scores call sentiment or infers demographic categories, this rule applies.

50(4) — Deepfake and public-interest text

Deepfakes must be disclosed. AI-generated text on matters of public interest published to inform the public must be labelled, with narrow editorial exceptions.

8. Data Residency & International Transfers

GDPR Chapter V governs transfers of personal data outside the EEA. You need either an adequacy decision under Article 45 or an Article 46 safeguard. Adequacy covers certified US importers under the EU–US Data Privacy Framework, as well as the UK, Switzerland, and others. The most common Article 46 safeguard is Standard Contractual Clauses backed by a Transfer Impact Assessment. The EU AI Act doesn’t add a new transfer rule, but its documentation and logging obligations make the transfer surface very visible to regulators.

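For AI agents, data can leave the EU at several points even if your primary deployment is in Frankfurt. Model inference calls, agent memory and session state, audit logs, and tool outputs can each end up in another region.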

Many EU customers now contractually require EU-region inference plus EU-region storage for agent memory, logs, and tool outputs. Anthropic, OpenAI, Google, and Mistral all offer EU endpoints, but you have to configure them explicitly. If you’re on a managed agent platform, verify that memory, session state, and audit logs do not cross the border, and that the DPA names every sub-processor.
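Verifying residency is easier when every component that touches personal data is listed with its region. For anything outside the EEA, the inventory should also record the Chapter V mechanism covering it. Below is a sketch under assumptions: the region labels, component names, and mechanism strings are hypothetical, and the report only reflects what your inventory claims.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical region labels for EEA locations; map your provider's real region IDs here.
EEA_REGIONS = {"eu-frankfurt", "eu-dublin"}

@dataclass(frozen=True)
class DataFlow:
    component: str                            # e.g. "inference", "memory", "audit_logs"
    region: str
    transfer_mechanism: Optional[str] = None  # e.g. "adequacy", "scc+tia"

def residency_report(flows: list) -> dict:
    """Sort each flow into in-EEA, covered transfer, or uncovered transfer."""
    report = {"in_eea": [], "covered_transfer": [], "uncovered_transfer": []}
    for flow in flows:
        if flow.region in EEA_REGIONS:
            report["in_eea"].append(flow.component)
        elif flow.transfer_mechanism:
            report["covered_transfer"].append(flow.component)
        else:
            report["uncovered_transfer"].append(flow.component)
    return report
```

Under GDPR alone, `uncovered_transfer` must be empty. For a customer that contractually requires EU-only processing, `covered_transfer` must be empty as well.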

9. Compliance Checklist for Teams Deploying AI Agents

Work through this checklist in the order listed. Items marked AI Act come from the EU AI Act; items marked GDPR come from GDPR; many apply to both. Teams that don’t have an in-house compliance lead can have a senior integrator drive most of the items below to completion via our managed compliance build.

10. How Hosting Helps You Ship on Time

A realistic assessment: standing up the infrastructure side of this checklist from scratch takes a small team 4–6 months. That is more than the roughly three and a half months left before 2 August 2026. The compliance work that has to be yours still takes weeks even with infrastructure handled: risk classification, DPIA, human-oversight design, tool inventory, and documentation. Managed hosting is how you claw back the buildout time.

Rapid Claw handles the platform-level controls that the EU AI Act and GDPR require. Concretely:

| Requirement | What Rapid Claw Ships by Default |
|---|---|
| Article 12 logging | Per-agent event logs (tools, model calls, memory, human overrides) with hash chaining |
| Log retention | Default 12 months, configurable to 6 years; WORM object lock for regulated tenants |
| Audit trail | Immutable record of every config, prompt, and RBAC change; signed export for regulator requests |
| EU data residency | Frankfurt and Dublin regions with sealed cross-region replication; EU-endpoint routing for Claude, GPT, Mistral |
| GDPR Article 28 DPA | Signed DPA with flow-down to every sub-processor; public sub-processor list |
| Human oversight UX | Per-tool approval policies, stop-the-agent controls, trajectory inspector, reviewer audit trail |
| Transparency (Art. 50) | First-turn AI disclosure, C2PA embedding for generated media, configurable watermarks |
| Documentation | Annex IV template populated from your deployment, plus exportable tool inventory and risk register |

For a deeper look at the platform-level security and privacy posture, see the enterprise deployment guide and the AI agent firewall setup article, both of which cover tool-layer controls that map directly onto Article 9 risk management.

Deploy in an EU-compliant environment before August

Rapid Claw runs OpenClaw and Hermes Agent on EU-region infrastructure with immutable logs, human-oversight controls, a signed DPA, and Annex IV documentation templates. Ship the product; the platform handles the regulatory plumbing.